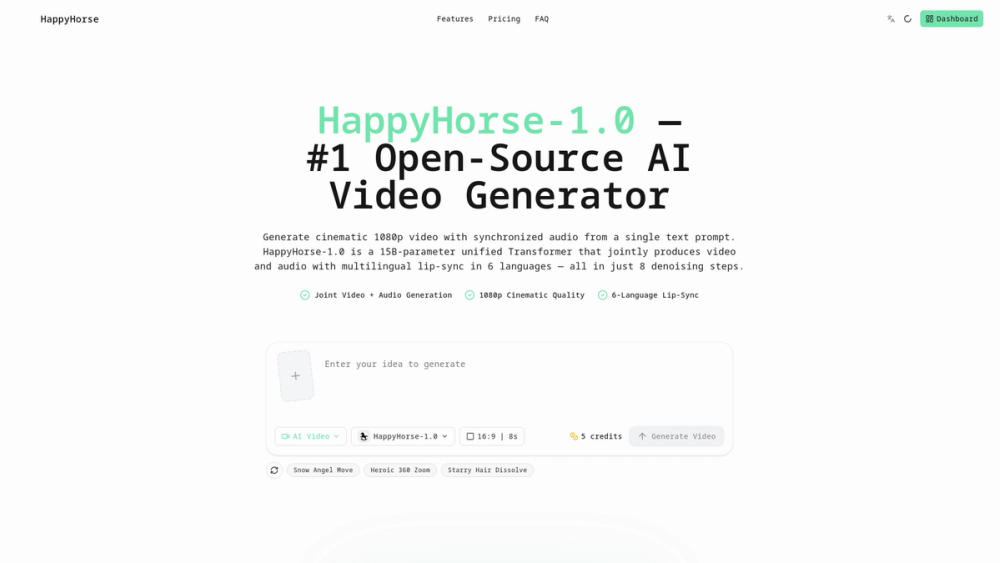

HappyHorse 1.0 is an open-source AI video generation model designed to streamline the production of cinematic content. Built on a 15-billion-parameter unified Transformer architecture, it generates high-definition 1080p video and synchronized audio in a single pass from a text or image prompt.

Key Features

- Unified Audio-Video Generation: Eliminates the need for post-production dubbing by generating dialogue, ambient sound, and Foley effects directly alongside video frames.

- Multilingual Lip-Sync: Natively supports six languages (Chinese, English, Japanese, Korean, German, and French) with expressive facial performance and lip movements matched to the spoken audio.

- Ultra-Fast Denoising: Achieves high-quality, cinematic output in just 8 denoising steps, far fewer than the dozens of steps that conventional diffusion samplers typically run, which shortens generation time.

- Image-to-Video Synthesis: Animates a static reference image into a dynamic scene, synthesizing camera and subject motion with realistic body movement.

- Open-Source Architecture: Provides a transparent and flexible platform, making it an ideal choice for developers and creators who require deep control over their video production workflows.

- High-Fidelity 1080p Output: Delivers professional-grade visual quality suitable for film, marketing, and educational applications.
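
To make the "8 denoising steps" figure from the Ultra-Fast Denoising feature concrete, here is a minimal sketch of a few-step sampling loop. It is not HappyHorse's actual sampler or API. It assumes a flow-style model that predicts a velocity at each step, integrated with plain Euler updates. The names `sample_few_step` and `make_toy_velocity` are hypothetical, and the toy velocity function stands in for the real network. The point is only that the number of steps equals the number of model calls, which is why a low step count translates directly into faster generation.

```python
from typing import Callable, Dict, List, Tuple

Vector = List[float]


def sample_few_step(
    velocity_fn: Callable[[Vector, float], Vector],
    noise: Vector,
    num_steps: int = 8,
) -> Vector:
    """Euler integration from t=1 (pure noise) down to t=0 (clean sample).

    Assumption: the model predicts a velocity dx/dt; this is a generic
    few-step scheme, not the sampler HappyHorse is confirmed to use.
    """
    x = list(noise)
    dt = 1.0 / num_steps
    for i in range(num_steps):
        t = 1.0 - i * dt          # current time: 1.0, 0.875, ..., 0.125 for 8 steps
        v = velocity_fn(x, t)     # one model call per denoising step
        x = [xi - dt * vi for xi, vi in zip(x, v)]
    return x


def make_toy_velocity(target: Vector) -> Tuple[Callable[[Vector, float], Vector], Dict[str, int]]:
    """Hypothetical stand-in for the network: an oracle that knows the target.

    On the straight path x_t = (1 - t) * target + t * noise, the velocity
    is (x_t - target) / t. A call counter records how often it is invoked.
    """
    calls = {"n": 0}

    def velocity_fn(x: Vector, t: float) -> Vector:
        calls["n"] += 1
        return [(xi - ti) / t for xi, ti in zip(x, target)]

    return velocity_fn, calls


if __name__ == "__main__":
    target = [0.5, -1.0, 2.0]     # toy "clean sample"
    noise = [1.3, 0.2, -0.7]      # toy starting noise
    fn, calls = make_toy_velocity(target)
    result = sample_few_step(fn, noise, num_steps=8)
    print("model calls:", calls["n"])
    print("result:", result)
```

With the toy oracle the path is a straight line, so Euler integration recovers the target up to floating-point error regardless of step count. A real model only approximates the velocity, which is why reaching good quality in so few steps is notable.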