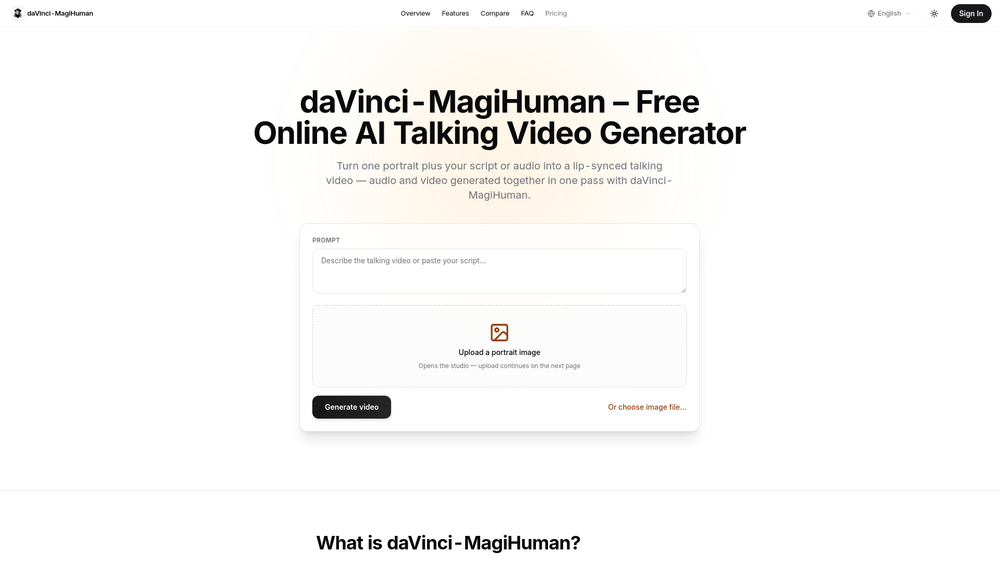

daVinci-MagiHuman is an open-source AI model that generates realistic, lip-synced talking videos from a single portrait photograph. Developed by Sand.ai and the GAIR Lab at Shanghai Jiao Tong University, the 15B-parameter model uses a unified Transformer architecture to generate audio and video tokens simultaneously. Because it does not rely on a complex, multi-stage pipeline, daVinci-MagiHuman offers a fast, efficient way to create high-quality talking avatars.
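To make the idea of a single unified pass concrete, here is a toy, dependency-free sketch of self-attention over one sequence that holds both video and audio tokens. It is an illustration only, not the model's actual code: the token counts, the embedding size, the identity projections, and the names `self_attention`, `video_tokens`, and `audio_tokens` are all assumptions made for this example.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head self-attention with identity Q/K/V projections.

    A real Transformer layer learns separate projections; they are
    omitted here to keep the sketch short.
    """
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        outputs.append(
            [sum(w * value[i] for w, value in zip(weights, tokens))
             for i in range(dim)]
        )
    return outputs


random.seed(0)
DIM = 4  # hypothetical embedding size
video_tokens = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(6)]
audio_tokens = [[random.gauss(0, 1) for _ in range(DIM)] for _ in range(3)]

# One sequence, one pass: every token can attend to both modalities.
joint = video_tokens + audio_tokens
mixed = self_attention(joint)

# Split the result back into per-modality streams.
video_out = mixed[:len(video_tokens)]
audio_out = mixed[len(video_tokens):]
```

Because both modalities share one attention pass in this sketch, each audio token's output depends on the video tokens and vice versa, which is the property a multi-stage pipeline has to approximate with separate alignment steps.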

Key Features

- Unified Audio-Video Generation: The model jointly processes audio and video tokens in a single pass, which keeps speech and lip movement tightly synchronized.

- Single-Photo Input: Transform any clear, front-facing portrait into a dynamic talking head with minimal setup.

- Open-Source Flexibility: Released under the Apache 2.0 license, the model weights and code are available for inspection, local deployment, and commercial use.

- High-Performance Inference: Optimized for speed, the model can generate short, high-quality clips in seconds on modern hardware such as the NVIDIA H100.

- State-of-the-Art Quality: Benchmarks show lower word-error rates and higher human preference scores than many existing public baselines.

- Multilingual Support: The model handles multiple languages, making it a versatile tool for global content creation.
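The word-error rate mentioned under State-of-the-Art Quality is a standard speech metric: the number of word substitutions, deletions, and insertions needed to turn a transcript into the reference text, divided by the number of reference words, so lower is better. The sketch below computes it with a word-level edit distance. It assumes simple whitespace tokenization and lowercasing; how the benchmarks behind the claim above normalize text or obtain transcripts is not stated here, and `word_error_rate` is a name chosen for this example.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] = edits needed to turn the first i-1 reference words
    # into the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One of four reference words is missing from the transcript.
print(word_error_rate("the quick brown fox", "the quick fox"))  # 0.25
```

For generated talking videos, the hypothesis would typically be a transcript of the generated speech and the reference the text the speaker was meant to say.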